Disclaimer: This conference topic chooses to study the legacy of id Software’s liberated code from a perspective that has been neglected by studies: what can be improved. id Software’s legacy has deeply changed the landscape, code liberation has fueled both academia and a lively passion in many people, and our Unvanquished game itself would simply not exist without this legacy. Thus, nothing should be taken away from the gratitude for the various actors who have made this possible. However, beyond focusing on what is very good, it seems interesting to also study what is less good, because it is often less success than failure that makes it possible to learn and surpass oneself.

This talk features personal opinions and makes use of colorful (if not doom-esque) metaphors.

Watch the video on Youtube (with English and French subtitles) or on Debian Peertube (you can also download it):

Preamble

From November 19th to 22nd, 2020, Debian organized an online “MiniDebConf” with video games as the main theme, and nattie invited the Unvanquished project to submit a talk. As an active member of this project, I proposed the following topic:

Building a community as a service: how to stop suffering from “this code is meant to be forked”.

And here is the presentation:

Earlier id Tech engines were well known to have seen their source code opened when they were replaced and thus unprofitable. While this was a huge benefit for mankind, game developers still suffer today from design choices and mindset induced by the fact such code base was meant to die. 20 years later we will focus on id Tech 3 heritage, how both the market, open source communities and development practices evolved, and embark in the journey of the required transition from dead code dump to an ecosystem as a service.

Starting with various examples from the video game industry, the conference is the result of fifteen years of observation and immersion, and is developing a broader reflection on the nature of a service, the need to develop communities, the place of collaboration in an open source community, how design choices can induce a mindset that feeds design in turn, etc. Some issues are addressed such as the (possibly hidden) cost of certain practices, the nature of an economy, or how certain methods more readily encourage the production of waste or else the recycling of production.

This is a full transcript of the lecture that was given at Debian MiniDebConf Gaming Edition on November 22, 2020.

Thanks to Debian for hosting and organizing the event and to Thomas Vincent from Debian France for transforming the transcripts into subtitles and the tedious work of synchronization, as well as his meticulous work of proofreading and correcting transcripts and translation.

Unvanquished: Building a community as a service

Hi, I’m Thomas Debesse from the Unvanquished game project. I’m one of the project heads and a contributor to various related projects. Unvanquished is a science-fiction game mixing real-time strategy mechanisms with a first person shooter point of view. The Unvanquished project is meant to provide a fully open source game from code to data.

Outside of free software development, I’m more into sysadmin work and site reliability engineering, or I would say, site rehabilitation engineering. That makes Debian an important part of my job and I’m convinced one of the first Debian services after its community is a work methodology. When your job is to provide infrastructures, software itself becomes less important than the way you use it, and Debian is defining ways to solve problems and builds a mindset to think about them.

Because what I do is to make others able to do their own work, I know the first meaning of a service: an act of being of assistance to someone. I believe it’s important to replace this word in our jobs, a service not being a software function, not being a subscription to some economic industry, a service is an act of being of assistance to someone.

The game engine powering the Unvanquished game is named the Dæmon engine, itself the great-grandchild of many forks, including Quake 3.

Projects based on opened id Software code are experiencing many forks. We will investigate why this problem not only lives on the human side of things, but also how the code design induces this behaviour. We will try to understand why some projects manage to grow communities and others don’t, and what can be done to limit the waste of resources and take care of people’s efforts, which is about taking care of humans themselves.

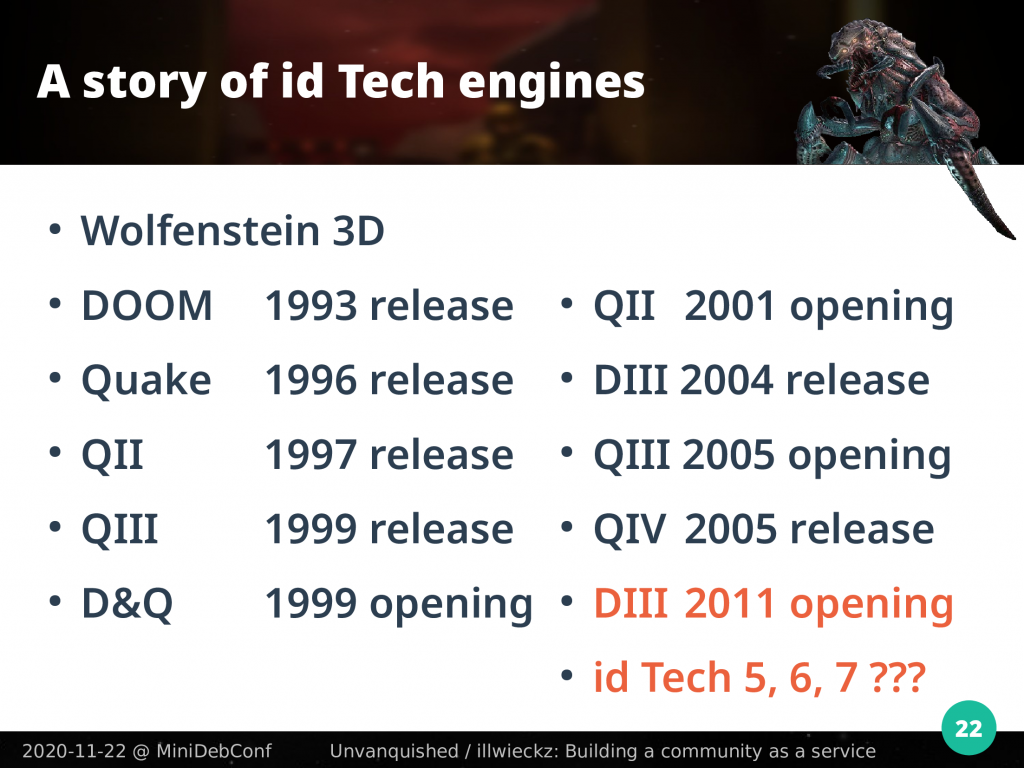

A story of id Tech engines

To begin with, we will look at id Software games history.

Following the success of Wolfenstein 3D, Doom was released in 1993 and its source code opened in 1999. That engine is usually called id Tech but the “engine” word does not fit actual meanings and names like id Tech 1, 2, 3 and others are part of a retroactive effort to name id Software snapshots of code in a way they look like products done by other companies.

There was never such a thing as an id Tech engine at the time. There was the DOOM engine, the engine shipped with DOOM. Then, some derivatives were made from it, which we call forks.

Quake was released in 1996 and while DOOM was an important milestone in first person gameplay, Quake was an important milestone for 3D environments. In 2020 a game like Xonotic still uses an engine based on and compatible with Quake the first while providing a competitive scene and present-day gaming experience.

id Software games coming after Quake are iterative improvements over Quake, same for tools. Engines shipped with new games are snapshots of the source tree and those games are forks.

Quake II was released in 1997. While Quake and Quake World code were opened in 1999, Quake II code was opened in 2001. Those three Quake forks are retroactively called under the same id Tech 2 name.

Quake and Quake II snapshots were sold and were used by third-parties as a base for games and series like Kingpin, Soldier of Fortune or Half Life.

As a company, id Software was basically selling two things: games, and code snapshots.

We can say some snapshots of id Tech 2 were opened, but it does not make sense to say id Tech 2 was. id Tech 2 is only a convenient approximation to classify various things that are close together, this does not name a tangible product.

Thanks to the opening of the code some community open source projects emerged like Nexuiz and its successor Xonotic, Warsow, AlienArena, UFO: Alien Invasion, DDay: Normandy, the upcoming Quetoo, etc. We may even see commercial releases based on the opened code like the upcoming Wrath: Aeon of Ruin by 3D Realms which uses the DarkPlaces engine like Xonotic.

id Software released Quake III Arena in 1999. The code snapshots are retroactively named id Tech 3. id Software opened the Quake 3 snapshot, the Return to Castle Wolfenstein snapshot and the Wolfenstein: Enemy Territory snapshot. Another company opened two Jedi Knight snapshots.

That Quake 3 source was successful and was used in a lot of well-known franchises, like the ones we just mentioned but also the Star Trek: Elite Force series, Soldier of Fortune again, Medal of Honor, some James Bond games, Resident Evil, Call of Duty and others.

In 2005 the code of Quake 3 was made open source when it was obsolete from an economic point of view. Older codes were opened by id Software when another code was marketed instead.

This opening allowed multiple open source games, or games with open source code, to become autonomous things. We can name Tremulous, on which Unvanquished is based, World of Padman, Smokin’ Guns, OpenArena and others. Urban Terror was distributed with the open source Quake 3 engine but game code was still proprietary.

Doom 3 was released in 2004 and its code opened in 2011. As usual snapshots were used in established franchises like Doom, Quake, Enemy Territory and Wolfenstein, but fewer snapshots seem to have been sold to third party companies. The opening of the Doom 3 code gave birth to fewer open source projects but we can name The Dark Mod which built a viable product.

We can yet again notice the confusion between the engines used by all those games and the ones that were opened. On the English Wikipedia page on the Quake video game series we can read:

Quake 4 was […] using the Doom 3 engine.

But you can’t run Quake 4 with the Doom 3 closed source engine, neither can you with the open source one. Those engines are incompatible forks.

The Doom 3 open code was covered with a different license than the previously opened codes. Looking at Quake 3 and Doom 3 derivatives with open code, we can see projects having GPLv2 only license, GPLv2 or later, and GPLv3. So, it may be complicated to exchange code.

In this case copyleft licenses seem to be a way for involved companies to keep control of the source code, like a non-commercial variant but with FSF and OSI seal of approval. It has the drawback of limiting what the community can do and eventually makes it harder for people to collaborate together, exchange code, backport fixes and merge branches.

The engine from id Tech 5 era used by the Rage game was not really used outside a handful of games including some Wolfenstein titles and a few others. The id Tech 6 and 7 generations engines seem to have not been used outside of existing Doom and Wolfenstein games and to not have been marketed as far as I know. And with Quake Champions they started to use third-party code. At this point it looks like you can’t make a game anymore with id Software technologies from today.

Competitor: Epic and Unreal engine

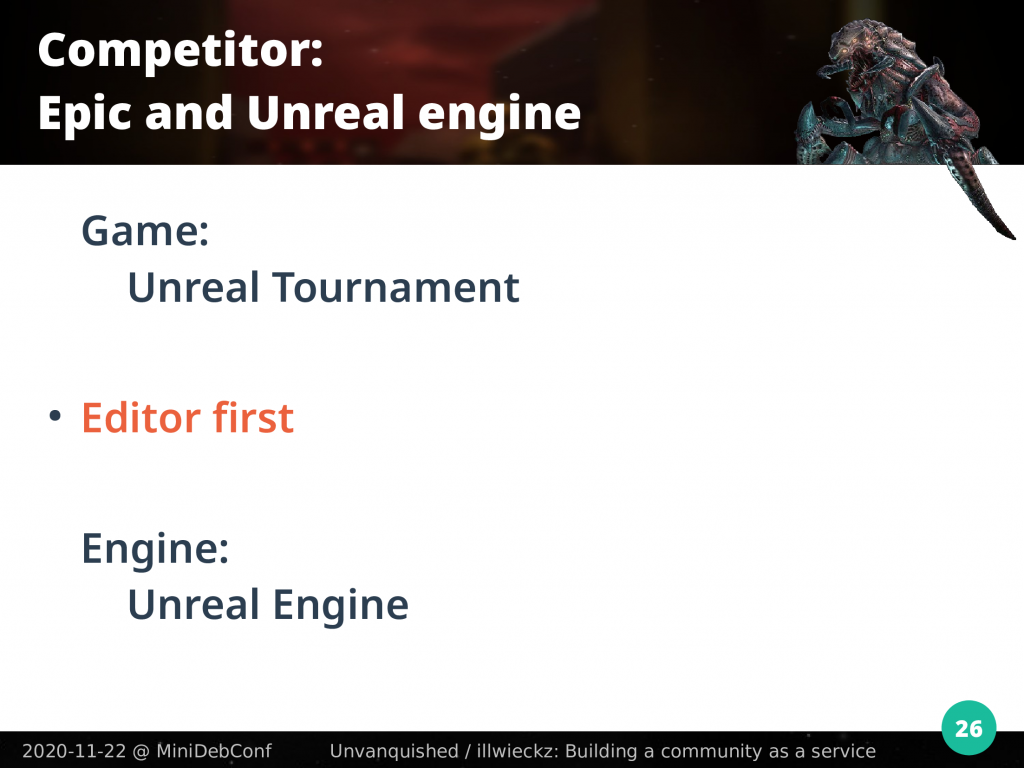

One well-known competitor of id Software was Epic Games. They released Unreal Tournament in 1999, almost at the same time Quake III Arena was.

Epic Games and id Software games attracted a large community of modders, people doing extra levels, implementing gameplay modifications and more. As time passes we can notice differences in the way those modifications were taken into account.

If you look at id Software code, you’ll notice a large amount of things making it possible for you to modify and extend the game, and make new games from it. I say the design “makes modification possible” and I don’t say it is designed for modifications. Some things in id Software code are thought to make modifications an easy thing, but when you deal with them you discover they make it possible to do something new without expecting the modification to be made by you.

Epic and Unreal started with editors. Tim Sweeney’s first game ZZT evolved from a text editor. That game from 1991 was distributed with a level editor and still has an active modding scene. I’ve heard developers at Epic Mega Games were spending if not wasting their work time playing Doom so to make them more efficient in their work, Tim Sweeney wrote them an editor. For what I know, that editor predated Unreal and predated Quake.

The first step Epic achieved on the road to making Unreal Tournament compete with Quake III Arena, a step taken before Quake 1 was even announced, was to make an editor.

After the release of Unreal various game developers asked to use the engine. The source code seems to have been quickly designed to be reusable, same for the editor.

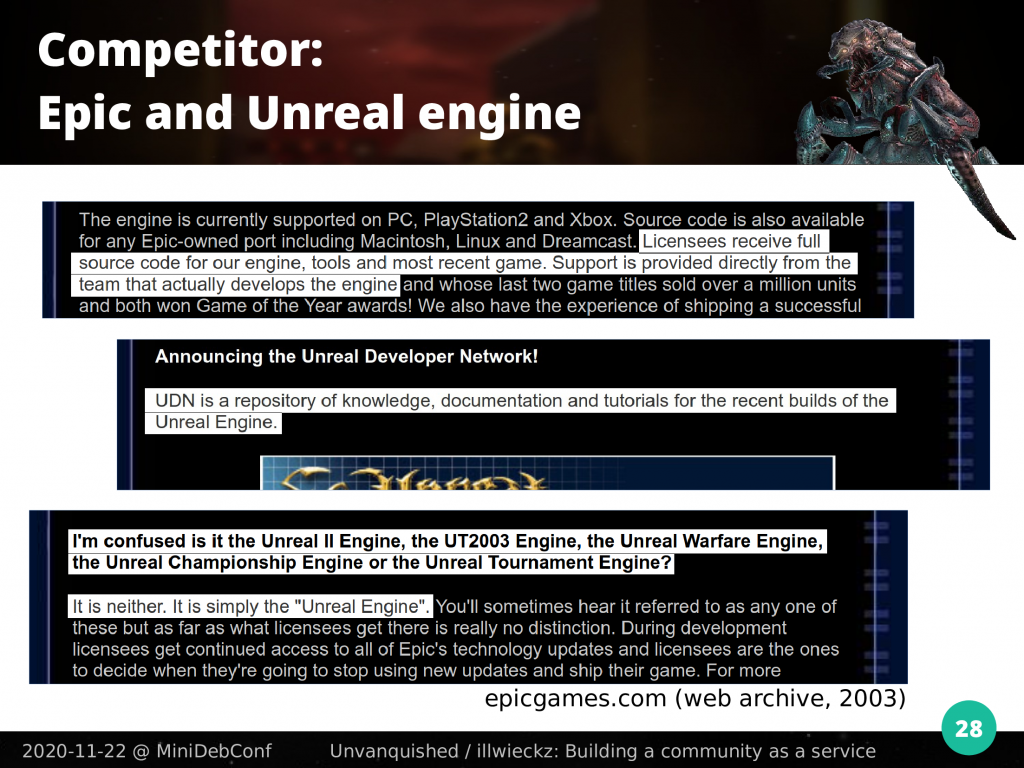

If we look at this forum thread from 2003 on gamedev.net, we can see one guy saying:

I’m pretty sure that thing like “Unreal Engine” doesn’t exist. The engine used in all unreal games is optimized and changed for each new Unreal game.

And someone else quotes the Unreal Developer Network website at the time, saying:

The Unreal Developer Network is a group of sites and services providing support and resources for licensees employing the latest builds of Epic Games, (builds 600+).

Then the people continue saying:

The engine is constantly under development […] and is only feature frozen on game release (if you license the engine from Epic you can get updates to the engine all the time).

The Epic Games website said at the time about the Unreal Developer Network:

Licensees receive full source code for our engine, tools and most recent game. Support is provided directly from the team that actually develops the engine […] UDN is a repository of knowledge, documentation and tutorials for the recent builds of the Unreal Engine.

On the question “is it the Unreal II Engine, the UT2003 Engine, the Unreal Warfare Engine, the Unreal Championship Engine or the Unreal Tournament Engine?”, the answer was clear:

It is neither. It is simply the “Unreal Engine”.

Of course that’s part of a communication effort, but while we see the workflow of snapshots being distributed like id Software did, it reveals a common trunk, or what we may call today a master branch. They already talked about a “build”. That means the engine must be repurposable without requiring the game developer to rebuild it.

Today Unreal Engine is one of the most popular game engines, or game framework like people said at the time. Maybe that Unreal Engine was the cause of that vocabulary slip.

Epic built a community of game developers around their technologies, they provide reusable tools and editors, and they now focus on making artists and developers meet together. This is made with community in mind. Epic’s first product is its service, and the community is a service itself.

While not being free, Unreal Engine sources are made available and are meant to get contributions back from game developers. This is about software projects being alive.

Competitor: Valve and Source engine

While we can expect big game projects to use custom branches of the engine, with time it becomes evident that one of the first services to provide to game developers is a reusable build.

Let’s now focus on Valve. Valve got some snapshots of Quake and Quake II, and when they got it in hand they called it gold. They called the source tree “Gold source”. They released Half Life on the engine they built from it and later, the same way Epic Mega Games removed the “Mega” adjective to focus on “Epic”, Valve removed “Gold” to just focus on “Source”.

Source engine by Valve is meant to be reusable. You can install the Source 2007 SDK in Steam and install the GoldenEye: Source game. Developers don’t have to build the engine themselves. The Source engine is not a template, you don’t have to fork, build and maintain it yourself.

Valve provides tools like the Hammer level editor and the map compiler. Those tools are maintained by Valve and designed to be reusable. Mappers don’t have to rebuild the tools.

It looks to not have been that obvious on id Software side.

With Steam, Valve built a software distribution. So, they had to design their software in a way it can be distributable in a repository, they solved file duplication and feature fragmentation, they solved dependencies and made it possible for various teams to have their own release cycle while depending on each other. Outside of legal and ideological issues, maybe we can technically make Debian packages for both the Source engine and games. At least, some of the issues were fixed, Valve had to.

Valve ports their own previous games to updated versions of the engine. They don’t see old code as a dead code, and they have to solve specific problems you get when porting games. Their designs must not prevent ports from happening.

While those products are closed source we see they solved some issues we face as an open source community. Valve’s first product is its service and the community is a service itself, this is about software projects being alive.

Those services induce methodologies like Debian as a software distribution has to build methodologies.

On open source side of things: Godot

On the open source side, Godot is a free and open source ecosystem that competes with engines like Unreal, Unity or Unigine, but doesn’t compete with id Tech for a simple reason: Godot is meant to be reusable from the start and development is meant to get contributions back to the main branch. By design Godot is not meant to be a game by itself but the underlying technology for your games. The product is not the game, but the tools. This perspective introduces interesting problems to solve.

As a game developer, you download a pre-built version of Godot and there you go. Godot is meant with community in mind, built as a free open source and community software.

id Software engines, editors and tools were templates

Unlike the others I just quoted, id Software closed or opened codes were game templates.

When you make a game based on id Software technologies, you have to fork an existing tree and maintain it yourself.

id Software was selling source code snapshots as templates, and as a developer you had to do required modifications so it is not anymore meant for another game but yours. This is called “instantiation”.

There are some limited features to run a “total conversion” mod on existing games, but you can’t take a build of the engine and just package it with your game to make a standalone game that runs without the original one.

You have to make some required edits and set up the infrastructure to rebuild the engine yourself. This produces a “code induced mindset”.

Because the code itself is designed to be forked, when you work with it, if you don’t try to think out of the box and make special efforts to go against what the code induces in your mind, you start to think the only way to do things is to fork without contributing back. If you ask “why do I have to fork?”, some people may answer as if you had asked a silly question, if they don’t simply say “it was always done this way”.

To prevent this on engine side some redesign is required.

To fix the fork mindset on the game code itself, the easiest would be to clone an upstream repository and facilitate merging back and forth. Older version control systems like Subversion are also known to make collaboration more costly than forking, so using tools inducing good practices like Git is a good idea.

Since id Software codes were opened because of being abandoned, outside of a few projects like ours you can’t hope for an upstream.

On the game code side it’s also possible to split parts of the code as reusable libraries. We’re not considering it for Unvanquished yet, but we ditched the existing historic user interface code for a third party one we don’t maintain ourselves, and for other parts we introduced some code generation mechanisms.

File formats meant to be forked

Let’s look at the BSP file format. BSP is the name of a technique to store spatial information for the game level as a 3D model. We will not focus on that technique but on the file with a .bsp extension that is used to store such information and other things.

You can see a BSP file as a primitive tarball: a container for multiple files the game engine loads. That can be the 3D model itself but also other things like pictures. A BSP file starts with a header with magic numbers, like the “IBSP” string for id Software games, “RBSP” for Raven games or “VBSP” for Valve games. Then a version number. After that you have to expect everything else to be undocumented and specific to every fork on earth.

There is an array for subfiles entries with their offsets and sizes, but there is no number to tell you how many subfiles there are. So, you cannot extract subfiles from other forks.
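To make this concrete, here is a minimal Python sketch of reading such a header. The layout used here (4-byte magic, 32-bit little-endian version, then pairs of offset and length) and the count of 17 entries for “IBSP” version 46 come from the publicly known Quake 3 format, not from this talk. The `KNOWN_LUMP_COUNTS` table and the function name are made up for the illustration. The point is that the count has to live in the reader, because the file does not carry it.

```python
import struct

# The number of directory entries is not stored in the file, so a reader
# needs a hand-maintained table per (magic, version) pair.
# Only the Quake 3 entry is filled in; every other fork would need its own.
KNOWN_LUMP_COUNTS = {
    (b"IBSP", 46): 17,  # Quake III Arena
}

def read_bsp_directory(data: bytes):
    """Return (magic, version, [(offset, length), ...]) for a known variant."""
    magic, version = struct.unpack_from("<4si", data, 0)
    try:
        count = KNOWN_LUMP_COUNTS[(magic, version)]
    except KeyError:
        raise ValueError(
            f"unknown BSP variant {magic!r} version {version}: "
            "cannot tell how many entries follow"
        ) from None
    # Each directory entry is two 32-bit integers right after the 8-byte header.
    lumps = [struct.unpack_from("<ii", data, 8 + 8 * i) for i in range(count)]
    return magic, version, lumps
```

A file from any fork that is missing from the table is rejected, even if most of its content is laid out exactly like the original.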

Imagine every id Tech-based game uses its own tar format, and if your game needs to ship one more file, you are required to fork the tar format and create another undocumented variant.

At first the problem does not look that problematic because it’s expected from you to fork the level editor, to fork the map compiler which produces the BSP data, and to fork the raytracer to render light maps. Everything works in your closed ecosystem that never exchanges with the world.

Things become hard when you need to get out of that mindset. SomaZ from the OpenJK project is writing a Blender plugin to load BSP files and bake lightmaps using the Cycles raytracer. No one wants to fork this plugin for every game, so in the end such a plugin would have to implement your own game-specific variant if you want to use it.

When you’re a proprietary game publisher from the 90’s burning games on CD ROMs that would never be updated, you don’t care. But even if id Tech games were produced with the PC market in mind, when you’re dealing with id Software open technologies you’re applying methodologies that fit perfectly if you target console cartridges.

The Unvanquished game uses the Dæmon engine which is a fork of the XreaL engine which is a fork of the opened Quake 3 engine. To introduce new features and to solve problems, XreaL had to extend the BSP format. This engine had code to load both the new format and the original one, but because of the way formats and engine were designed you had to recompile the engine with specific defines to enable support for one format or another, without being able to support both at the same time. For Unvanquished and Dæmon we have given up on those enhancements for now so as not to lose compatibility with third party software and existing assets, but that means we have to work around issues that have known fixes we don’t use yet.

Editors meant to be forked

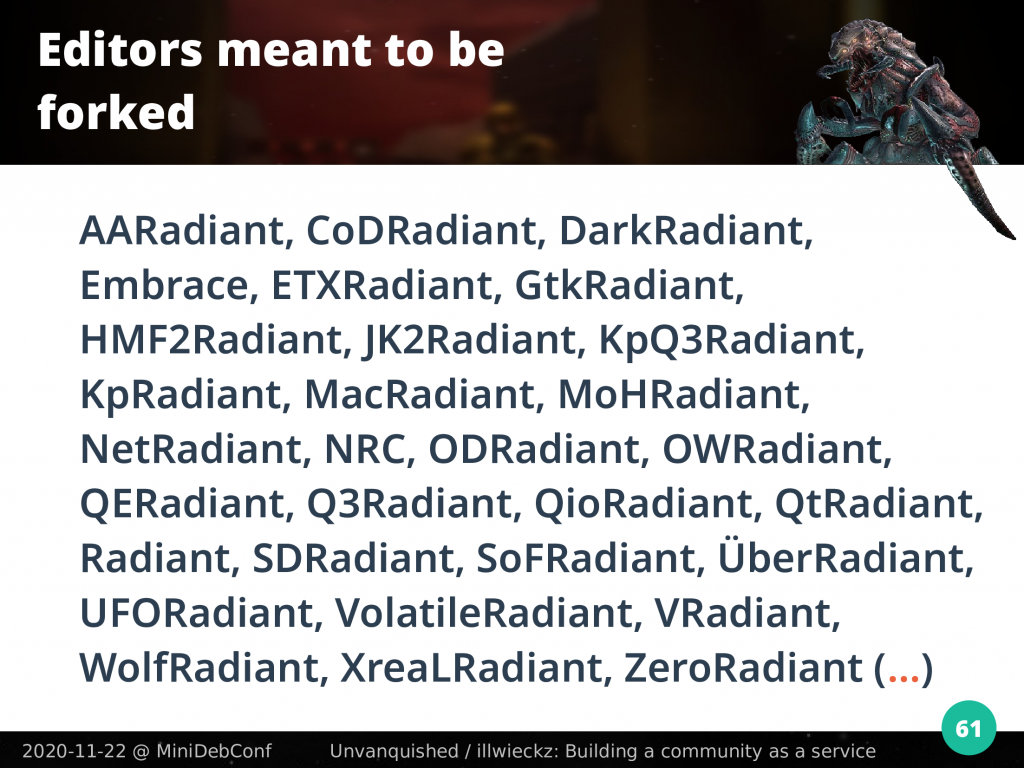

At Quake time there was an in-house level editor named QuakeEd, at Quake 3 time the editor was named QERadiant then Q3Radiant, from then you get CoDRadiant for Call of Duty, MoHRadiant for Medal of Honor, you get it…

Like the engine, the level editor had to be forked, instantiated, edited to fit your specific game, built and maintained by the game developer. The same went with map compiler tools.

Here is my own personal list of known Radiant instances for various games. Expect a long list too for map compilers and ray tracers.

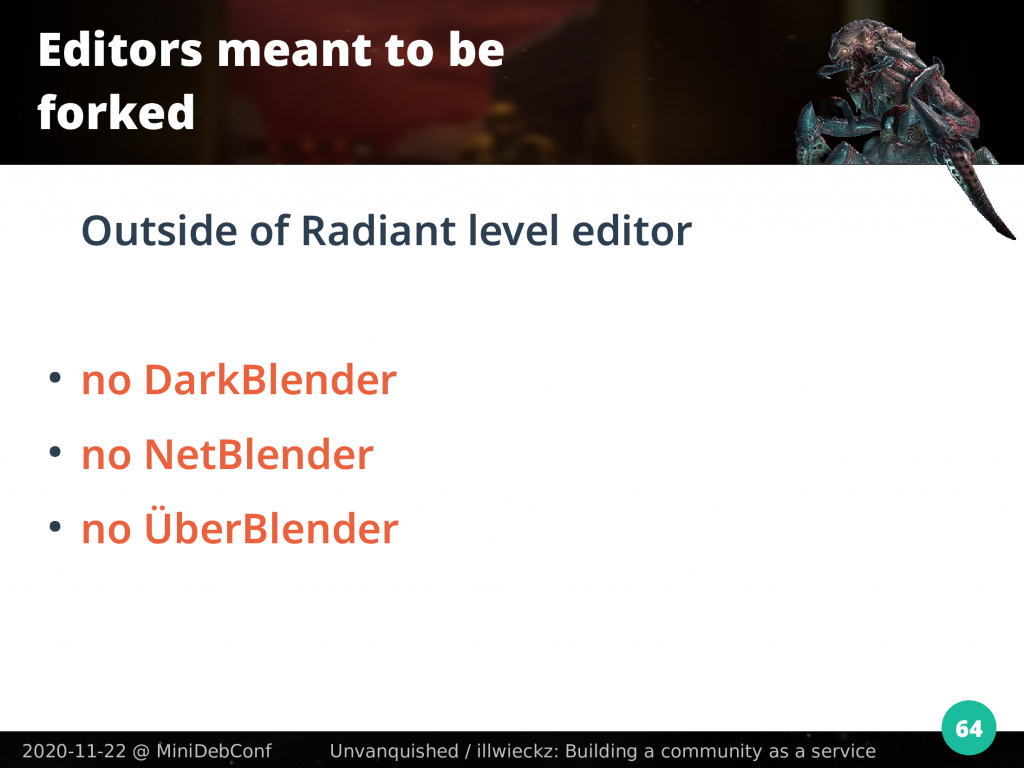

When you look outside of that id Software heritage, there is no DarkBlender, no NetBlender, no ÜberBlender… but when you’re dealing with id Software technologies, you have to. This is not only because the open code is dead, unsupported, and people don’t know how to collaborate together. The reason is it was meant to be that way: developers paying id Software at the time had to fork and do the editor maintenance and publishing work. Splash Damage had its own fork at the time, Raven had one fork per game and that’s a lot.

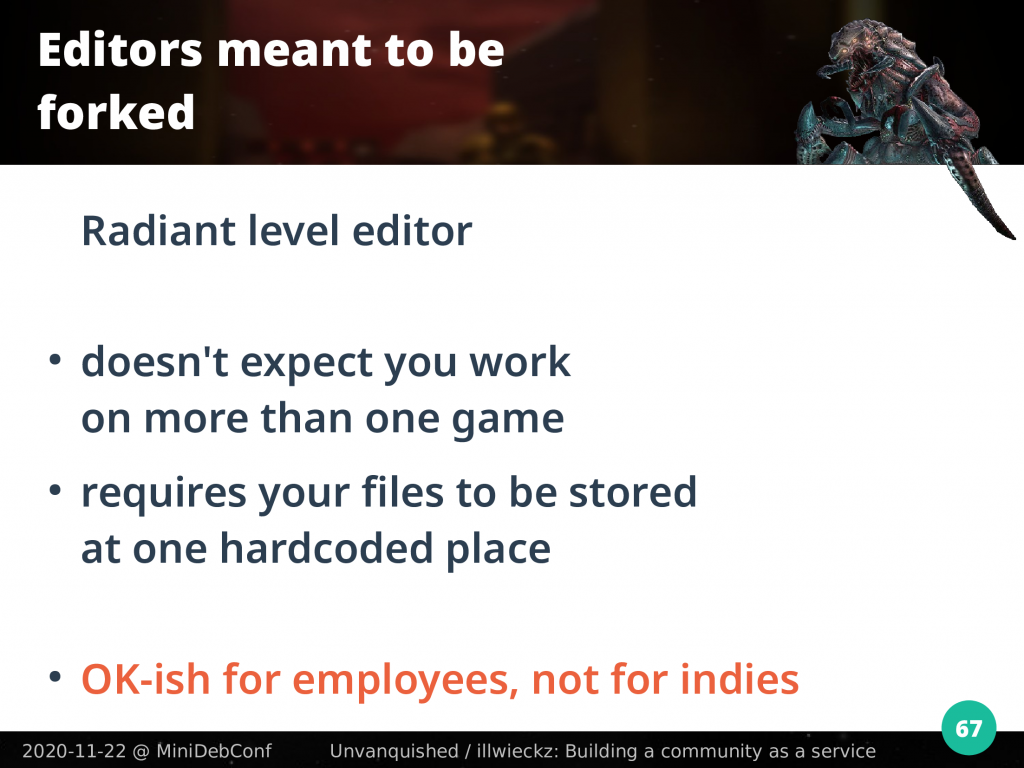

At some point id Software opened the source code for the editor and the map compiler of Quake 3, it was ported to GTK to be multiplatform, and made somewhat multigame. Don’t expect this multigame feature to be straightforward. It is not meant for you to work on multiple games at the same time, multigame only means you don’t have to reinstall the editor when you stop working on one game to start working on another.

Even with its multigame enhancement, the Radiant level editor does not expect you to edit levels for one game one day and for another game another day. This may fit the development model of commercial games, but not the contribution model of an open source volunteer community driven by passion and desire.

The editor expects you to store files at only one hardcoded place, so you cannot store your files where you want. This is enough if you’re part of a team working on one game only on a shared folder provided by your company, not if you’re an enthusiast.

History also uncovered an interesting id Software behaviour: when they needed a level editor for their Quake Live game, instead of using GtkRadiant 1.5 that was maintained by the community after they opened it, they just went back to an older 1.4 version they kept in-house before republishing and reopening it again. They forked their own code from an old version, and after that people had to choose between two forks because features and games support was split. At this point it looks like id Software did not know how to deal with a community.

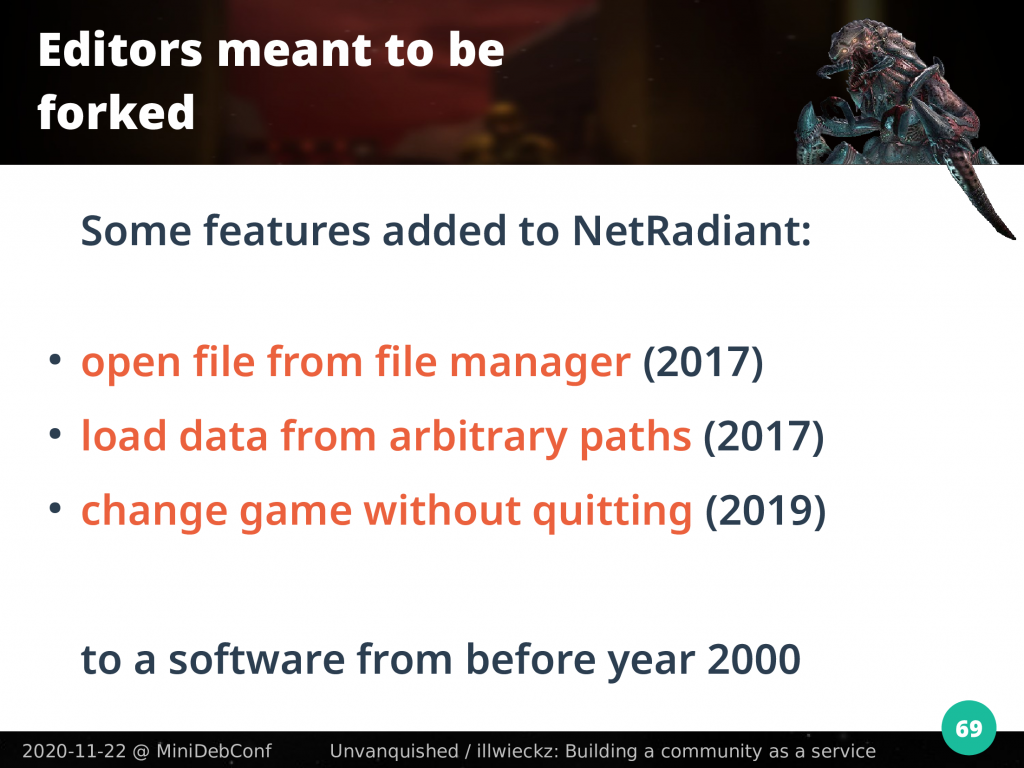

Here is a simple list of features that were added on Unvanquished initiative to the NetRadiant branch, which is the community fork with probably the largest list of supported games today:

- open file from command line and file manager, that was implemented in 2017,

- load game assets from predefined arbitrary paths, that was implemented in 2017,

- change the current game without having to restart the editor yourself, reload the current map with the new game in case you’ve loaded the wrong map with the wrong game, that was implemented in 2019, last year.

The ability to choose where to store your data and to open a file from the file manager was added in the last three years, for software designed before the year 2000. The reason it took so much time is that this software is meant to be forked to target your own specific game hierarchy.

Because this is a Debian talk, I quote a special feature that was added to NetRadiant in 2018: making it possible to install the editor following the File Hierarchy Standard. When you’re expected to ship a fork of the editor with every different game you publish, making the editor able to be installed widely is the least of your concerns, and it may even be stupid because all the forks will ship with related tools like map compilers that may share the same binary name but don’t behave the same.

There are already two Radiant forks shipped in Debian, DarkRadiant which is a fork of NetRadiant purposed for the Dark Mod and focusing on Doom 3 based games, and UFORadiant which is a fork of an old branch of DarkRadiant that can only edit assets for the UFO: Alien Invasion game.

On software development and child sacrifice

So, we figured out multiple things:

- id Software technology is very efficient but it’s like every part of it assumes you will fork the whole and never contribute back nor pool development efforts,

- id Tech engines were meant to be sold and forgotten. It looks to have been designed for only one social relation: the sale,

- map formats, materials, net code and other things are not really meant to be extended or versioned, and in many cases there are no bits to even detect the formats, while sometimes just one integer would have made it possible to parse unknown data.
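As an illustration of that last point, here is a minimal Python sketch of a container whose header carries one extra integer: the number of entries. Everything in it is hypothetical (the “DEMO” magic, the 4-byte tags, the function names); it is not an existing id Tech or Dæmon format. It only shows that once the count is stored, an older reader can walk the whole directory and skip what it does not understand instead of requiring a forked format.

```python
import struct

def pack_container(lumps: dict) -> bytes:
    """Pack {4-byte tag: payload} with an explicit entry count in the header."""
    header_size = 12 + 12 * len(lumps)
    directory, body, offset = b"", b"", header_size
    for tag, payload in lumps.items():
        directory += struct.pack("<4sii", tag, offset, len(payload))
        body += payload
        offset += len(payload)
    # Header: magic, format version, and the one integer that was missing.
    return struct.pack("<4sii", b"DEMO", 1, len(lumps)) + directory + body

def read_known_lumps(data: bytes, known_tags: set) -> dict:
    """Read the entries this reader understands and skip all the others."""
    _magic, _version, count = struct.unpack_from("<4sii", data, 0)
    found = {}
    for i in range(count):
        tag, offset, length = struct.unpack_from("<4sii", data, 12 + 12 * i)
        if tag in known_tags:
            found[tag] = data[offset:offset + length]
    return found
```

With this layout, a file that ships one more entry is still readable by a tool that was written before that entry existed.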

Almost everything in id Tech engines is designed to ship a game that will kill the previous one. New development is meant to ship a new incompatible game. The design is thought around that mindset, and then the design induces that mindset again.

Forking a software project is like giving birth to a baby, a fork is as fragile as a newborn: it cannot do anything else by itself other than dying. If you’re not able to give enough care and attention to the newborn, the baby will die. Same for forks.

The development workflow of id Software at the time looked like child sacrifice: every time a new game had to be developed, the previous child was killed to redirect resources and life support on the new one. Unlike other game engine developers like Epic or Valve, id Software never took the risk to let its child reach adolescence and become an adult.

They didn’t ship reusable engine builds, editors were not truly reusable, they did not build a community around the tools as if they were a product by themselves, they did not build a software distribution system so they never had to solve the related problems, they did not build a data distribution system so they did not have to solve problems about format incompatibilities and file conflicts.

At some point in game development with id Tech based code, you’ll notice that to solve some problems you not only need to extend your engine but to fork your own game. While working on Unvanquished I may have seen this situation like 5 or 6 times.

In some way we can measure the impressive genius of John Carmack by the fact that his technical skills kept id Software alive for so many years despite so many marketing mistakes, strategic misses and opportunity losses, preventing their own game communities from growing up with them.

With such a retrospective, what looks weird is to discover that because the code was dead, I mean killed, it was possible to open it. So on one hand we got free and open source code, but on the other hand this code was meant to be dead and to produce zombies.

Also, the fewer contributions you get back, the easier it is for your legal department to clear up everything for an open source release. So, it’s like it was more possible for id Software source code to be opened because the workflow was more closed than others. This is a bit weird to figure out.

In 2020, no game developer is looking for an engine that requires to be forked and maintained alone. For Dæmon one objective is to make it possible for a game developer to just reuse an existing build, let’s say the one we do for Unvanquished, and package it with their own game code and assets. This is not entirely done yet, but we want it to be done.

Code induced mindset

Quake 3 engine comes with a really nice feature called PK3 VFS, it’s a special virtual file system making it really easy to extend game data. But it’s not designed for modding. This can be used for modding, but it’s designed to allow the game to receive updates coming from one source: the seller.

The design does not prevent the player’s game from being broken by any random custom file from any random server. So we extended it by creating the DPK VFS in a way that makes it possible to load only a pre-defined selection of assets instead of loading everything.
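The talk does not detail how that selection is declared, so the following Python sketch rests on an assumption: each package lists the names of the packages it depends on, and the engine loads the requested package plus its dependencies and nothing else. The package names and the `select_packages` function are hypothetical.

```python
def select_packages(root: str, deps: dict) -> list:
    """Return the root package and everything it depends on, dependencies first.

    `deps` maps a package name to the list of package names it declares.
    Packages that are installed but not reachable from `root` are never loaded.
    """
    ordered, seen = [], set()

    def visit(name: str) -> None:
        if name in seen:  # already handled, also protects against cycles
            return
        seen.add(name)
        for dep in deps.get(name, []):
            visit(dep)
        ordered.append(name)

    visit(root)
    return ordered


# Hypothetical content of a player's package directory.
installed = {
    "map-station": ["tex-tech", "res-base"],
    "tex-tech": ["res-base"],
    "res-base": [],
    "map-random": ["tex-broken"],  # downloaded from some random server
    "tex-broken": [],
}
load_order = select_packages("map-station", installed)
```

Here `map-random` and `tex-broken` stay on disk but never reach the game when `map-station` is loaded, which is the difference with a design that loads every archive it finds.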

That’s not enough to fix some issues and there are some other features to implement, but you’ll see the difference between designing the format to allow the seller to update the game, and designing the format while taking into account that unknown random people will update your own game.

That minor change induces a new mindset. Because packages now have a base name and a standardized version string (reusing Debian’s version sorting), it becomes natural to think about packages as libraries. Artists can do textures libraries and models libraries, they can have their own release cycle and mappers can then create levels using this or that texture or model library version.
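To show what a sortable version string buys, here is a minimal Python sketch that picks the newest version of a package from a list of file names. It assumes file names of the form `name_version.dpk`, which is an assumption of this example, and it implements a simplified form of the Debian comparison: the whole string is treated as one upstream version, with no epoch and no separate revision. The function names are made up for the illustration.

```python
import re
from functools import cmp_to_key
from itertools import zip_longest

def _char_order(c: str) -> int:
    """Weight of one character: '~' first, then end of text, letters, the rest."""
    if c == "~":
        return -1
    if c == "":
        return 0
    if c.isascii() and c.isalpha():
        return ord(c)
    return ord(c) + 256

def _compare_text(a: str, b: str) -> int:
    for i in range(max(len(a), len(b))):
        # Slicing past the end yields "", which stands for "end of text".
        diff = _char_order(a[i:i + 1]) - _char_order(b[i:i + 1])
        if diff:
            return diff
    return 0

def compare_versions(a: str, b: str) -> int:
    """Negative if a sorts before b, zero if equal, positive otherwise."""
    # Split each version into alternating (non-digit text, digits) chunks.
    chunks_a = re.findall(r"(\D*)(\d*)", a)
    chunks_b = re.findall(r"(\D*)(\d*)", b)
    pairs = zip_longest(chunks_a, chunks_b, fillvalue=("", ""))
    for (text_a, num_a), (text_b, num_b) in pairs:
        diff = _compare_text(text_a, text_b)
        if diff:
            return diff
        na, nb = int(num_a or "0"), int(num_b or "0")
        if na != nb:
            return -1 if na < nb else 1
    return 0

def split_package(filename: str) -> tuple:
    """Split 'name_version.ext' into (name, version)."""
    stem = filename.rsplit(".", 1)[0]
    name, _, version = stem.partition("_")
    return name, version

def newest(filenames: list, name: str) -> str:
    """Return the highest version available for the package called `name`."""
    versions = [v for n, v in map(split_package, filenames) if n == name]
    return max(versions, key=cmp_to_key(compare_versions))
```

With such an ordering, `0.10` sorts after `0.9` and a pre-release like `1.0~rc1` sorts before `1.0`, so a mapper can depend on a texture library by name and let the newest compatible release win.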

The change we made is so minor that if you just revert the file extension to the one expected by Quake 3, a 20-year-old build can load the data.

But that very minor change means a lot of things for a community, each member being able to develop its own craftsmanship and relations to others, being a supplier of another one or relying on suppliers, this is called an economy.

We’ve talked about the BSP file format, it embeds some pictures that are precomputed light and shadow textures. Only one storage format was supported and only one picture size was allowed, and no bit describes the format, only the number of pictures was variable. Years ago some people had the good idea to make the engine able to load such textures from outside this file. This allowed new sizes and new picture formats, including compressed ones.

This simple example shows how the first design requires games to fork the format to create incompatible variants if someone needs to store higher resolution textures, while the second design allows any engine to add any format without introducing incompatibilities to the BSP file specification itself.
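A small Python sketch of the arithmetic behind the first design: since nothing in the file describes the pictures, a reader can only divide the size of the embedded block by a hardcoded picture size. The 128×128 RGB figure comes from the publicly known Quake 3 format, not from this talk, and the function name is made up for the illustration.

```python
# Hardcoded by the format: nothing in the file states these values.
LIGHTMAP_SIDE = 128
LIGHTMAP_BYTES = LIGHTMAP_SIDE * LIGHTMAP_SIDE * 3  # RGB, one byte per channel

def count_internal_lightmaps(lump_length: int) -> int:
    """Deduce how many lightmaps are embedded from the size of their block."""
    if lump_length % LIGHTMAP_BYTES:
        raise ValueError("block size does not match the only supported picture size")
    return lump_length // LIGHTMAP_BYTES
```

A fork that wants 256×256 pictures has to change the constant, and from then on its files and the original ones can no longer be told apart by a generic tool.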

id Tech code followed the modern linear consumption pattern: production, consumption, trash. Our effort at Unvanquished is to recreate a cycle. And to recreate this cycle, we have to fix the design inducing the mindset of the linear pattern.

On open source code and sharing human communities

Dave Airlie from Red Hat published a blog article on this topic while I was preparing this talk. While talking about graphics stack instead of game engines, he said:

There is a big difference between open source released and open source developed projects in terms of sustainability and community. In theory it might be possible to produce such a stack with the benefits of open source development model, however most vendors seem to fail at this. They see open source as a release model, they develop internally and shovel the results over the fence into a github repo every X weeks, […] but they never expend the time building projects or communities around them.

He added:

I started radv because AMD had been promising the world an open source Vulkan driver for Linux […]. When it was delivered it was open source released but internally developed. There was no avenue for community participation […]. Compare this to the radv project in Mesa where it allowed Valve to contribute the ACO backend compiler and provide better results than AMD […] could ever have done, with far less investment and manpower.

There are four important things in that quote. First, it is important for companies to spend time building projects and communities around their own projects.

Second, the name of Valve was quoted again. The frontier between bad and good is not between humans but goes through each human being, same for companies and human organizations. While Epic and Valve never made their game engine free and open source, they understood what a community is. On the other hand, while id Software knew what it is to release their code as free and open source, they did not really build communities around them, even closed source communities.

When you look back it looks like companies that were using id Software technologies were more likely to accept that situation given the code was efficient, and for some of them the only social relation may have been the sale.

Rebecca Heineman, the developer of the 3DO port of Doom, even said that when her company bought the right to port DOOM to that platform, she did not get the source code at first. This was not id Software’s fault and she had no problem getting a copy of the source on a CD by reaching John Carmack directly, but it means that even when source code is bought on purpose, the idea of a service seems to be optional.

Software design usually reflects the services around it. Of course we can’t expect methodologies from the DOOM era to be on par with current ones, but later id Software source code does not seem to reflect fundamental changes in those methodologies.

The way problems are solved or features implemented usually reflects methodologies and workflows. And reflections of problems faced by communities are hard to notice in id Software code design.

The third important thing is the wording Dave uses in his blog post when talking about the “open source release model”. We can remove the “open” adjective. id Tech software seems to have been built more around a source release model than around a shared development effort model, even when proprietary and under non-disclosure agreement.

The fourth important thing is the cost. id Tech software seems to have been designed only to solve technical problems, not the social problems of getting contributions back and preventing waste of development resources on a community scale. Resources seem to have been saved at the local level of the company to the detriment of the community.

In the end, such design deeply harms the technical growth of the software itself when it has to outlive one man or one company and relies on volunteer contributions.

Building an ecosystem and a community as a service

How can a project like Unvanquished and its Dæmon engine do what the Quake 3 engine never really did on the community side, and make its way through the id Software hell of the fork mindset?

First, and that’s not technical, human beings need to experience an existing project as an upstream one. For example when you need to add something to Blender, it sounds obvious that you have to submit patches to them. This is not obvious per se; it’s knowledge a project has to build up.

People have to hear about organizations and people’s intentions to review and merge their code. People have to hear their code is meant to be a part of a whole. It’s required to build up visibility on those purposes and intentions.

If you believe it’s obvious that any patch you’ll make for the Linux kernel would better be upstreamed, it means someone succeeded in sowing that feeling in your heart. The Android industry reveals this belief is not obvious: despite all the effort put into making the upstream clearly identifiable and into code and tools designed for contributions, many companies act as if the Linux kernel was meant to be forked, and they implement their features on what is already a Linux cadaver.

A very important thing is to lurk and live within communities, to listen to their needs, notice the growing aspirations, and accompany the births. It’s a bit like probing a market but it’s about being a friend.

We can’t underestimate the importance of being close to communities. I just discovered this month another somewhat alive XreaL-based project: a Kingpin remake over XreaL. I’m among the ones having the deepest knowledge about all those forks but I missed this one for a decade. Less than two weeks after I talked for the first time with one of the remaining die-hard contributors, he helped us fix an issue for our upcoming release. It took me 10 years to meet this community, I hope we can do more things together.

Sometimes a design can be made better just by talking with others even if they would never use the software. This helps to step back and get a better picture of what could be done. Sometimes we discover the software can do more than we need just by doing the same thing differently. To open doors you have to look at people’s paths.

People underestimate the value of their contributions. Some forks are done by people tinkering for fun or students trying out something out of curiosity. They usually don’t realize that what they do is precious. That may be due to some wrong assumptions coming from the school system making them think they’re not adults yet or not professional ones and anything they would do is not for real. But any patch that solves a problem is for real. Also while growing up we discover professionals may just be teenagers pretending to be serious… So, no one should underestimate the value of any contribution.

Some people just fork while saying “I just want to add this”, assuming the original code is finished and there is nothing to get from it in the future. Closed source software may induce this mindset, but for an open source project there is no such thing as finished code. Also, they overlook that other people just “want to add this” too.

As we saw with the BSP and DPK formats, it’s sometimes possible to redesign things with very few differences so that the code becomes easier to extend without having to fork it. Sometimes one little change reveals that forking an entire code base just to tweak a picture size is a waste, while previously such a fork for such a minor change was made a requirement by design.

For Unvanquished we made it clear the engine is meant to be repurposable, and we got a lot of help from Xonotic people to split trees and make the Dæmon engine a project by itself. Because one of the first services that must be provided to a game developer is a reusable build, every issue that is still preventing this is now properly reported and is expected to be fixed one day.

Another important thing is the editor and tools: with the help of Xonotic again, we did a lot of work to make the NetRadiant level editor more accessible to newcomers and improve the workflow in more natural ways.

However, so much effort did not prevent another NetRadiant fork from appearing, and people again have to choose between editors depending on which games and features are supported. Because this fork is based on an old branch that predates a large revamp of the code, the effort to merge them is high: on a developer salary basis, the cost of the work to merge the two branches would be a number with four zeroes if valued in dollars. That would not be the cost of one feature, that would just be the cost of the waste. Making people work together is a really long and difficult road.

What is important to keep in mind is that at some point the cost of forking is higher than the cost of contributing back. This can be hard to notice in communities of volunteers, when your own acts do not mean more work for you but more work for others.

We can’t do magic and have to rely on other ones’ will. This is the risk we get by letting a child grow: we can give the direction we think is the good one, but in the end everyone is free.

A service is an act of being of assistance to someone, and the first service everyone gets in life is a community.

Questions & Answers

Sahil: Thanks Thomas for the talk, let’s move on to the Q&A, so the first question is:

All of this sounds great to me, but has there been any success in getting the other non-Unvanquished forks onboard?

Thomas: If the question is about the other projects that are interested in our engine, no one succeeded yet to make their port on the engine because it requires a lot of manpower, so maybe it may happen in the future, for now we don’t know. But anyway this already helped us to, how I can say… for example they fixed bugs or we found bugs we fixed when looking at making their port possible, so in the end if no one game successfully ported to the engine yet, the engine is better thanks to them, thanks to their effort for this port.

Sahil: Cool, next I believe the second question has already been answered, about the green animal.

Thomas: Yes it’s called a granger. There are a lot of stories that were written by some people, so sometimes you may hear the name “Dave”; you can read that name in some of our blog posts for example, making a joke of it.

So, it’s called Dave, it’s a granger. It’s a species in Unvanquished that has the role to build the structures so if everything is destroyed in the alien team and there is no granger, the alien team has lost.

Sahil: Cool! We are almost after the time, so thank you Thomas for the amazing talk and all the work you have done, and thanks Jathan for the direction and, enjoy the rest of the MiniDebConf.

Jathan: Thank you Thomas.

Thomas: Bye!

For people wanting to explore the topic a bit more, Karl Jobst just reminded me in a recent video¹ that the latest official remake of DOOM by id Software was made on the Unity engine,

1. a third party engine while they were the company pushing new engines at the time,

2. yet again acting as if any contribution coming back from the community did not exist.

Also, you may be interested in diving a bit into the stories of some DOOM ports, like the ones shipped with later games such as the Xbox Doom 3 or the Doom 3 BFG edition. I haven’t heard whether those merged contributions from the community, but I really doubt it since they would have had to deal with the GPL license covering code written by people other than them. So unfortunately it’s expected that the easiest path for them would have been to just fork again an old version of their internal tree that had never been touched by the community. Yet again, id Software was living its life while the community was living another one.

¯\_(ツ)_/¯

¹ https://www.youtube.com/watch?v=_XY1D_r6YVk

Here is a French translation of this article:

🇫🇷 https://linuxfr.org/news/batir-une-communaute-comme-un-service 🙂